After deciding on the types of interaction in the programming part, I realized that I needed machine learning techniques to map, analyze, and compare more complex gestures. I looked for an external library that would let me integrate every part of the work into a single piece of software. I came across ml.lib [7], developed by Ali Momeni and Jamie Bullock for Max MSP and Pure Data, which is primarily based on the Gesture Recognition Toolkit [8] by Nick Gillian.

Unfortunately, none of the objects in the package would load in Max MSP; I got an error indicating that the object bundle executable could not be loaded. I also discovered that the creators had discontinued support and debugging for the library. It appears, however, that the library still works on Windows in both Max MSP and Pure Data, and on macOS in Pure Data only.

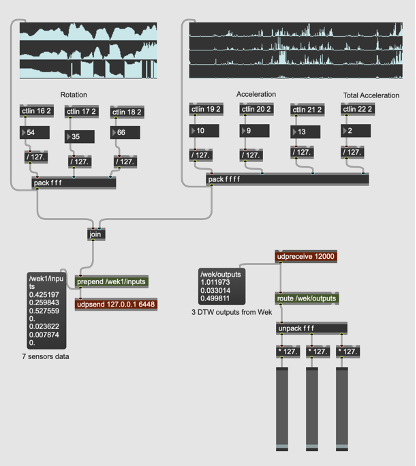

Since I had developed all the patches and processing parts in Max MSP on macOS, I decided to work with Wekinator, an open-source machine learning application created by Rebecca Fiebrink that sends and receives data via OSC. In the early stages, I used [pack] to combine all the sensor data (three rotation values, three acceleration values, and one total acceleration value) into a single message, sent it to Wekinator with [udpsend], and received the results with [udpreceive].
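Outside Max, the same seven-value list can be sketched in a few lines of Python. This is only an illustration of what the [pack] step assembles, not the patch itself: the function name `build_feature_vector` is hypothetical, and the total acceleration is assumed here to be the Euclidean magnitude of the three acceleration axes, which the sensor or patch may compute differently.

```python
import math


def build_feature_vector(rotation, acceleration):
    """Return [rx, ry, rz, ax, ay, az, total] as a list of 7 floats.

    Assumption: "total acceleration" is the magnitude of the
    acceleration vector, sqrt(ax^2 + ay^2 + az^2).
    """
    if len(rotation) != 3 or len(acceleration) != 3:
        raise ValueError("expected 3 rotation and 3 acceleration values")
    total = math.sqrt(sum(a * a for a in acceleration))
    return [float(v) for v in (*rotation, *acceleration, total)]


if __name__ == "__main__":
    # Made-up sample values, used only to show the shape of the result.
    print(build_feature_vector((10.0, -5.0, 90.0), (3.0, 4.0, 0.0)))
```

Keeping the order of the seven values fixed matters more than the order itself, since Wekinator treats each position in the list as a separate input feature.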

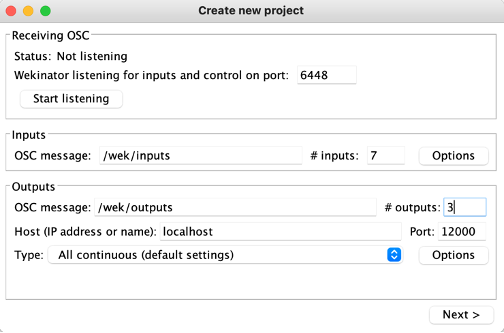

One basic but necessary consideration is that the port Max sends to must match the port on which Wekinator listens for inputs. When everything runs on the same computer, the host is localhost and Wekinator's default input port is 6448. The message name used to send inputs from Max must also match the one Wekinator expects, e.g., /wek/inputs. The same considerations apply when receiving outputs from Wekinator. Finally, Wekinator needs to know the number of inputs and outputs in order to configure the machine learning model. At this stage, I set it to 7 inputs and chose to receive 3 outputs.
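To make the port, message name, and input count concrete, here is a minimal Python sketch of the same exchange using only the standard library. It is not the Max patch: the helper names (`encode_osc_floats`, `decode_osc_floats`, `send_inputs`) are hypothetical, the OSC encoding is written by hand for illustration, and the output address `/wek/outputs` is assumed to be Wekinator's default rather than something configured in the patch above.

```python
import socket
import struct

WEKINATOR_HOST = "127.0.0.1"      # localhost: everything on one computer
WEKINATOR_INPUT_PORT = 6448       # port Wekinator listens on for inputs
INPUT_ADDRESS = "/wek/inputs"     # must match the name set in Wekinator
OUTPUT_ADDRESS = "/wek/outputs"   # assumed default name for outputs
NUM_INPUTS = 7
NUM_OUTPUTS = 3


def _osc_string(text):
    """Encode an OSC string: ASCII, null-terminated, padded to 4 bytes."""
    data = text.encode("ascii") + b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def encode_osc_floats(address, values):
    """Build an OSC message carrying only 32-bit big-endian floats."""
    type_tags = "," + "f" * len(values)
    payload = struct.pack(">%df" % len(values), *values)
    return _osc_string(address) + _osc_string(type_tags) + payload


def decode_osc_floats(packet):
    """Parse an all-float OSC message into (address, list of floats)."""
    end = packet.index(b"\x00")
    address = packet[:end].decode("ascii")
    offset = (end + 4) & ~3                  # skip terminator and padding
    tag_end = packet.index(b"\x00", offset)
    tags = packet[offset:tag_end].decode("ascii")
    count = len(tags) - 1
    if tags != "," + "f" * count:
        raise ValueError("only float arguments are supported in this sketch")
    offset = (tag_end + 4) & ~3
    values = struct.unpack(">%df" % count, packet[offset:offset + 4 * count])
    return address, list(values)


def send_inputs(values, host=WEKINATOR_HOST, port=WEKINATOR_INPUT_PORT):
    """Send one frame of inputs to Wekinator over UDP."""
    if len(values) != NUM_INPUTS:
        raise ValueError("Wekinator is configured for %d inputs" % NUM_INPUTS)
    packet = encode_osc_floats(INPUT_ADDRESS, values)
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(packet, (host, port))
    return packet


if __name__ == "__main__":
    send_inputs([0.0] * NUM_INPUTS)
```

On the receiving side, a UDP socket bound to the port Wekinator sends to would pass each datagram through `decode_osc_floats` and expect the output address followed by three floats. If either the message name or the number of values differs from what Wekinator was configured with, the inputs are not accepted, which is the same failure mode as a mismatch in the Max patch.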

Figure 1. Real-Time Data Exchange and Configuration Between Max MSP and Wekinator